How to Use Your Higher Education Data to Boost Enrollments

Higher education analytics are an essential aspect of enrollment marketing. University marketers can use data-driven discoveries to make better-informed decisions every day.

At the foundational level is data. University marketing departments collect vast amounts of it, including website visitors’ behavior (time on site, number of page views, etc.), but individual data points rarely provide enough context to inform decisions. The next stage of analytics is information, which offers a more digestible way to understand a marketing campaign’s performance (total sessions, average conversion rate, etc.). Information is a collection of relevant data that can guide adjustments and optimizations to your marketing efforts.

The final and most useful stage of analytics is knowledge: clear-cut conclusions that have been proved over time (e.g., the best month for higher education marketing results is January, while the worst month is December).

Once the analytics team has the right people and technology in place, it can collect data, analyze the available information, and even provide valuable knowledge to your department and institution. The ability to identify actionable insights and act on them is critical. As more and more universities turn to online program managers (OPMs), now is the time to make sure you stay one step ahead of your competitors in data-driven decision-making.

Challenges With Analytics in Higher Education

Enrollment marketing budgets aren’t unlimited, and you may not have the means to track every enrollment effort at every phase. You may also lack the budget to hire data analysis experts. To make the most of the dollars you do have, test and learn with existing resources and focus on the data you can track. Plenty of platforms, including Instagram and Google, offer some form of free tracking for content posted on their channels.

If you do have the funds but are still struggling, your challenges may be related to infrastructure. A 2021 report by the Association of Public and Land-grant Universities (APLU) describes one major issue for today’s institutions: lack of data integration. Universities largely collect and analyze departmental data in separate systems, and these data silos prevent users from drawing broader conclusions. Without a solid data governance strategy, meaningful trends are more likely to go unnoticed. A dedicated team that ensures the right technology and data processes are in place makes information flow much more smoothly and equips team members to access and use data analytics more efficiently.

To find success in your enrollment marketing strategy, use as much automation as you can afford, set up the right teams for governance and analysis, and work smarter with the data your work provides.

4 Higher Education Analytics Strategies

At Archer Education, we keep several key tactics in mind when supporting our clients in building a higher education analytics strategy that works.

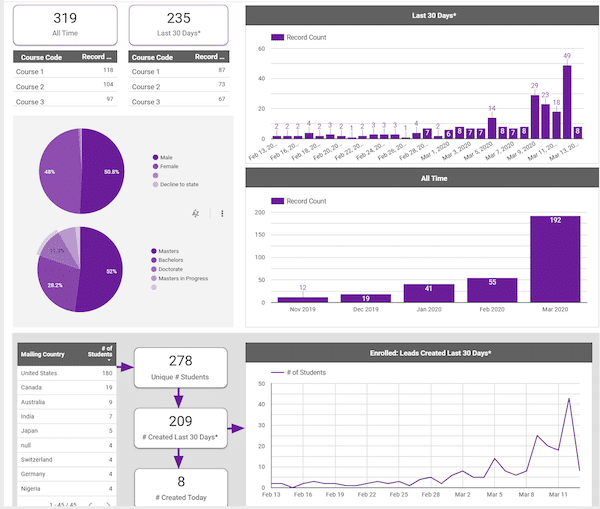

1. Create a Dashboard That Displays All of Your Funnel Data

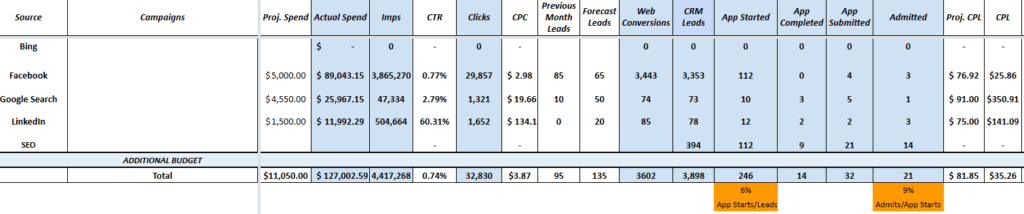

Have you been asked to create analytics reports for your student enrollment marketing team? If so, you know how important it is to build a report around the core performance metrics that actually matter. As higher education marketers, we know that leads are just the starting point: the top of the enrollment funnel. Generating thousands of leads who never start their applications, let alone submit them, wastes your marketing budget.

The student enrollment funnel starts with leads at the top. Next, your nurturing pay-per-click (PPC) strategies move them down the funnel to start their applications, and enrollment advisors help them complete and submit those applications. Ultimately, though, your leads need to enroll in the program before your marketing team can celebrate its success.
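
To make a dashboard reflect these funnel segments, it helps to report step-to-step conversion rates rather than raw lead counts alone. The Python sketch below assumes you already have unique counts for each stage. The stage names (`lead`, `app_started`, `app_submitted`, `enrolled`) are hypothetical labels, not fields from any particular platform.

```python
# Funnel stages in order, as described above. The names are hypothetical labels.
FUNNEL_STAGES = ["lead", "app_started", "app_submitted", "enrolled"]


def funnel_conversion(counts: dict[str, int]) -> dict[str, float]:
    """Return the conversion rate for each step of the enrollment funnel.

    `counts` maps each stage name to the number of unique people who reached
    that stage. A rate is 0.0 when the previous stage is empty.
    """
    rates: dict[str, float] = {}
    for prev, curr in zip(FUNNEL_STAGES, FUNNEL_STAGES[1:]):
        prev_n = counts.get(prev, 0)
        rates[f"{prev}->{curr}"] = counts.get(curr, 0) / prev_n if prev_n else 0.0
    # Overall lead-to-enrollment rate: the number that ultimately matters.
    leads = counts.get("lead", 0)
    rates["lead->enrolled"] = counts.get("enrolled", 0) / leads if leads else 0.0
    return rates
```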

Your marketing analytics dashboard must align with the above funnel segments. You can easily export leads to your dashboard directly from Google Analytics or from the marketing platform itself, but you need to make sure you’re filtering out the duplicates.

To deduplicate your leads report, you need a daily pass-back file from your enrollment customer relationship management (CRM) system. CRMs not only deduplicate lead profiles but also report on each lead’s application activity. With proper URL tagging, you can tie each lead to the referring marketing channel. Typically, a CSV export of your CRM data will provide accurate lead data, along with the application and enrollment status of each lead.
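
Once the pass-back file is available, tying leads to channels and funnel stages is a small aggregation job. The sketch below uses only Python’s standard library. It assumes a CSV with hypothetical columns `lead_id`, `landing_url`, and `status`, one row per already-deduplicated lead, with the channel carried in a `utm_source` query parameter on the landing URL.

```python
import csv
import io
from collections import Counter, defaultdict
from urllib.parse import parse_qs, urlparse

# Hypothetical status values in the pass-back file, ordered by funnel depth.
STATUS_ORDER = ["lead", "app_started", "app_submitted", "enrolled"]


def channel_from_url(landing_url: str) -> str:
    """Read the utm_source tag from a landing URL ("untagged" if absent)."""
    params = parse_qs(urlparse(landing_url).query)
    return params.get("utm_source", ["untagged"])[0]


def funnel_by_channel(passback_csv: str) -> dict[str, Counter]:
    """Count how many leads per channel reached each funnel stage.

    Assumes one row per lead, with `status` holding the furthest stage
    reached. Unknown statuses raise ValueError instead of being dropped.
    """
    funnel: dict[str, Counter] = defaultdict(Counter)
    for row in csv.DictReader(io.StringIO(passback_csv)):
        channel = channel_from_url(row["landing_url"])
        depth = STATUS_ORDER.index(row["status"])
        # A lead at a given status has also passed through every earlier stage.
        for stage in STATUS_ORDER[: depth + 1]:
            funnel[channel][stage] += 1
    return dict(funnel)
```

For a real export, pass the file contents (for example, `open(path, newline="").read()`) instead of an inline string. Counting each lead at every stage it has passed through keeps the per-channel numbers consistent with the funnel view: a lead who enrolled also counts as having started and submitted an application.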

2. Use Behavior Flow to Guide Your Content Strategy

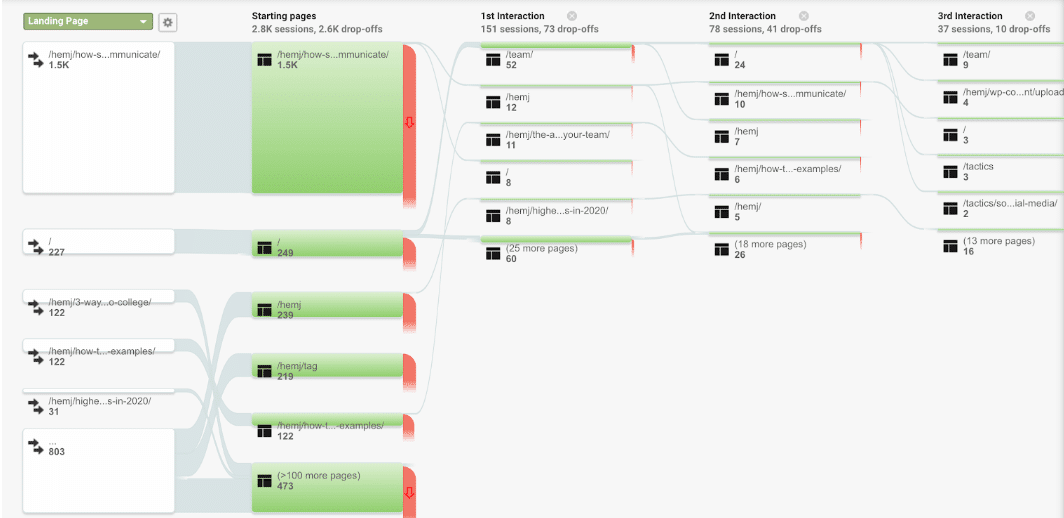

Understanding a university’s website traffic can be challenging because university websites serve multiple target audiences and stakeholders. Identifying the prospective-student user flow can help you understand your target audience’s needs. To that end, the “Behavior Flow” report in Google Analytics lets you analyze traffic and see how users navigate through the site.

This report is a gold mine for content and web strategists trying to understand what kind of content resonates with their audience. It also provides insight into challenges a university site may have with user experience and content depth. For example, suppose a user lands on an admissions page but turns to site search during their second or third interaction on the site. This behavior suggests that the user is either having a hard time navigating your site or simply not finding what they’re looking for.
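
“Behavior Flow” is a visual report, but the same check can be run on raw navigation data if you can export it (for example, from a path exploration or an event-level export). The sketch below assumes you have each session’s ordered page paths. The export format, the `/admissions` prefix, and the `/search` path are all hypothetical. It flags sessions that land on an admissions page and then use site search on the second or third pageview.

```python
def struggling_sessions(sessions: dict[str, list[str]]) -> list[str]:
    """Return IDs of sessions that land on an admissions page and then use
    site search on the second or third pageview.

    `sessions` maps a session ID to its ordered list of page paths. The
    "/admissions" and "/search" paths are hypothetical; use your site's own.
    """
    flagged = []
    for session_id, paths in sessions.items():
        if not paths or not paths[0].startswith("/admissions"):
            continue
        # paths[1:3] holds the second and third interactions, if they exist.
        if any(p.startswith("/search") for p in paths[1:3]):
            flagged.append(session_id)
    return flagged
```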

Furthermore, “Behavior Flow” lets you examine key user movements. For example, a user moving from a program page to a faculty bio page may be a prospective student. Navigation to curriculum or admissions pages is another indicator of prospective-student behavior. Understanding prospective students’ needs helps higher education marketers position their programs more effectively and drive more users into the student enrollment funnel.
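
These movements can also be counted directly. The sketch below assumes the same kind of hypothetical session export of ordered page paths, plus a made-up mapping from URL prefixes to page types. It tallies transitions between page types and applies the heuristic above for spotting likely prospective students.

```python
from collections import Counter

# Hypothetical mapping from URL prefixes to page types; adapt to your site.
PAGE_TYPES = {
    "/programs": "program",
    "/faculty": "faculty_bio",
    "/curriculum": "curriculum",
    "/admissions": "admissions",
}


def page_type(path: str) -> str:
    """Classify a page path by its URL prefix ("other" if none matches)."""
    for prefix, kind in PAGE_TYPES.items():
        if path.startswith(prefix):
            return kind
    return "other"


def transition_counts(sessions: dict[str, list[str]]) -> Counter:
    """Count (from_type, to_type) moves between consecutive pageviews."""
    counts: Counter = Counter()
    for paths in sessions.values():
        types = [page_type(p) for p in paths]
        counts.update(zip(types, types[1:]))
    return counts


def looks_like_prospect(paths: list[str]) -> bool:
    """Program page -> faculty bio, or any curriculum or admissions visit."""
    types = [page_type(p) for p in paths]
    if ("program", "faculty_bio") in zip(types, types[1:]):
        return True
    return any(t in ("curriculum", "admissions") for t in types)
```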

3. Look at How Exogenous Variables Impact Enrollment

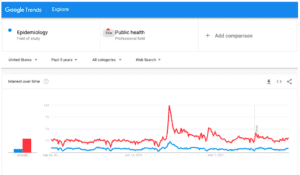

The ability to understand and analyze your university or program’s marketing strengths and opportunities is important, but the impact of exogenous (external) variables on the performance of a university, college, or program often gets overlooked. The economy is a prime example. Higher education tends to be counter-cyclical, so a struggling economy often coincides with stronger enrollment results for our industry, while a strong growth market can put a strain on enrollment marketing.

Other factors beyond your control may also be at play. Beyond economic ebbs and flows, some fields of study may be in high demand one year and much less so the next. For example, at the onset of the coronavirus pandemic, we saw significant growth in interest in epidemiology and public health programs.
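
To spot fields of study that are heating up or cooling down, you can compare inquiry volume year over year. This sketch assumes a hypothetical structure that maps each field to its per-year inquiry counts, and it ranks fields by growth.

```python
def yoy_growth(inquiries: dict[str, dict[int, int]], year: int) -> dict[str, float]:
    """Year-over-year change in inquiries for each field of study.

    `inquiries` maps a field name to {year: inquiry count}. Fields without
    a nonzero prior-year count are skipped, since growth is undefined there.
    Results are sorted from fastest-growing to fastest-shrinking.
    """
    growth = {}
    for field, by_year in inquiries.items():
        prev, curr = by_year.get(year - 1, 0), by_year.get(year, 0)
        if prev:
            growth[field] = (curr - prev) / prev
    return dict(sorted(growth.items(), key=lambda kv: kv[1], reverse=True))
```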

4. Answer the Questions That Will Direct Your Retargeting Strategy

Analytics are the foundation of any solid growth strategy. Whether you’re identifying which leads to pursue and where to find them or mapping the journey through the enrollment process, a targeted approach to success isn’t possible without an analytics strategy in place. Fundamentally, analytics are not a mere collection of numbers; they tell a story.

Who is my audience? What are their online habits? How quickly do they perform an action? Once you have listed the questions that need to be answered, you can start to outline where the answers should come from. After compiling the appropriate datasets, you can introduce visualizations, from dashboards to tables, to help answer these questions. The ideal outcome for any higher education analytics team is not only to answer every question identified in the discovery process but also to surface additional insights that inform future strategic actions.

The university marketing journey is unique because there may be little opportunity for recurring business. Therefore, it’s critical to identify, analyze, and target the behaviors that prove successful. By analyzing lead-to-enrollment conversion and reapplying the tactics that work, targeted strategies can provide a pathway to your marketing goals.

For programs that do see repeat students pursuing additional certificates or degrees, understanding the overall enrollment landscape can support retargeting efforts. Looking at key behaviors (how many students take multiple courses, how long after finishing a course a student considers further education, and what that group’s demographics look like) can help you time and design campaigns that build on existing relationships to drive repeat enrollments.
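
Two of the behaviors listed above, how many students enroll more than once and how long they wait between enrollments, can be computed from basic enrollment records. The sketch below assumes a hypothetical list of `(student_id, start_date)` pairs. Demographic breakdowns would need additional fields joined in from your student records.

```python
from datetime import date
from statistics import median
from typing import Optional


def repeat_student_stats(
    enrollments: list[tuple[str, date]],
) -> tuple[float, Optional[float]]:
    """Return (share of students with more than one enrollment,
    median days between a student's consecutive enrollments).

    The median is None when no student has enrolled more than once.
    """
    by_student: dict[str, list[date]] = {}
    for student_id, start in enrollments:
        by_student.setdefault(student_id, []).append(start)
    if not by_student:
        return 0.0, None
    gaps: list[int] = []
    repeaters = 0
    for dates in by_student.values():
        dates.sort()
        if len(dates) > 1:
            repeaters += 1
            # Days between each pair of consecutive enrollments.
            gaps.extend((b - a).days for a, b in zip(dates, dates[1:]))
    share = repeaters / len(by_student)
    return share, (median(gaps) if gaps else None)
```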

Need Help Analyzing Your Higher-Ed Enrollment Data?

Archer Education’s team of data analytics experts is always up to date on strategies to help your program gain visibility in prospective students’ search results and increase conversions. Our higher education analytics tactics can help your university:

- Understand where and how your leads are entering the funnel

- Examine conversions down to the individual ad or keyword

- Pinpoint which channels need to be fostered to bring new leads

To learn more about Archer’s tech-enabled marketing, enrollment, and retention services, contact our team today.